Benchmarking NEST

See also

When compiling NEST for benchmarking, see our CMake options for improved performance and energy saving.

beNNch

Computational efficiency is essential to simulate complex neuronal networks and study long-term effects such as learning. The scaling performance of neuronal network simulators on high-performance computing systems can be assessed with benchmark simulations. However, maintaining comparability of benchmark results across different systems, software environments, network models, and researchers from potentially different labs poses a challenge.

The software framework beNNch tackles this challenge by implementing a unified, modular workflow for configuring, executing, and analyzing such benchmarks. beNNch is built around the JUBE Benchmarking Environment: it installs the simulation software, provides an interface to benchmark models, automates the annotation of data and metadata, and handles the storage and presentation of results.
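To illustrate why automatic metadata annotation matters for comparability, the following is a minimal, hypothetical sketch of attaching environment information to a set of benchmark timings. It is not beNNch's actual metadata schema or storage format; the function name `annotate_result` and all field names are assumptions chosen for illustration, and only Python standard-library calls are used.

```python
import json
import platform
from datetime import datetime, timezone
from typing import Any, Dict


def annotate_result(timings: Dict[str, float], params: Dict[str, Any]) -> Dict[str, Any]:
    """Bundle benchmark timings with the parameters and environment that produced them.

    Hypothetical record layout for illustration only; beNNch defines its own format.
    """
    return {
        "timings_s": timings,    # measured wall-clock times in seconds
        "parameters": params,    # model and run configuration (e.g. node count, seed)
        "metadata": {            # environment information gathered automatically
            "python_version": platform.python_version(),
            "system": platform.system(),
            "machine": platform.machine(),
            "hostname": platform.node(),
            "timestamp_utc": datetime.now(timezone.utc).isoformat(),
        },
    }


if __name__ == "__main__":
    # Made-up values, used only to show the shape of the record.
    record = annotate_result({"propagation": 1.5}, {"num_nodes": 4, "seed": 1})
    print(json.dumps(record, indent=2))
```

Storing every result together with the configuration and environment that produced it is what makes it possible to compare runs later across machines, software versions, and researchers.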

For more details on the conceptual ideas behind beNNch, refer to Albers et al. (2022) [1].

Figure 38: Example beNNch output (Figure 5C of [1])

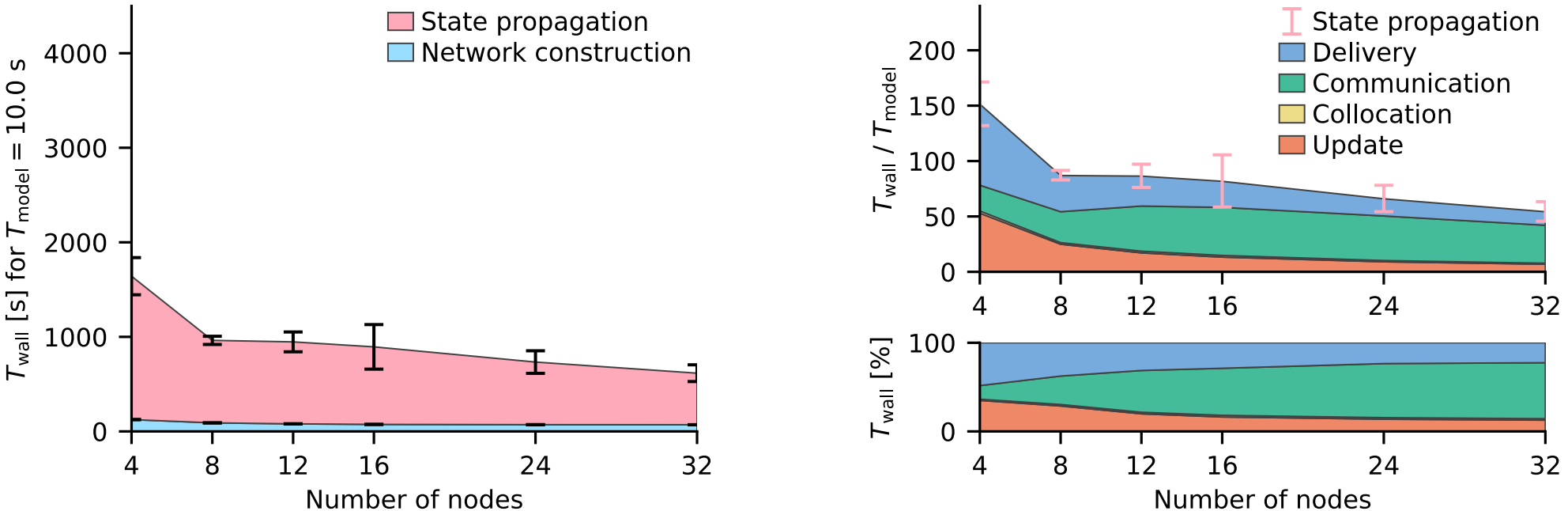

Strong-scaling performance of the multi-area model simulated with NEST on JURECA-DC. The left graph shows the absolute wall-clock time, measured with Python-level timers, for both network construction and state propagation. Error bars indicate the variability across three simulation repeats with different random seeds. The top right graph displays the real-time factor, defined as the wall-clock time normalized by the model time. Built-in timers resolve four phases of the state propagation: update, collocation, communication, and delivery. Pink error bars show the same variability of the state propagation as in the left graph. The lower right graph shows the relative contribution of each of these phases to the state-propagation time.
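The quantities plotted in the figure can be sketched in a few lines of plain Python. This is a minimal illustration, not beNNch's analysis code: all function names are hypothetical, the model time is assumed to be given in milliseconds (the unit NEST uses for simulation time), and the spread across repeats is assumed to be the sample standard deviation, which may differ from the measure used in the figure. In a PyNEST script, `build` could wrap the `nest.Create` and `nest.Connect` calls and `propagate` could call `nest.Simulate(T)`.

```python
import statistics
import time
from typing import Callable, Dict, List, Tuple


def time_phases(build: Callable[[], None], propagate: Callable[[], None]) -> Dict[str, float]:
    """Measure network construction and state propagation with Python-level timers."""
    t0 = time.perf_counter()
    build()
    t1 = time.perf_counter()
    propagate()
    t2 = time.perf_counter()
    return {"construction": t1 - t0, "propagation": t2 - t1}


def real_time_factor(wall_clock_s: float, model_time_ms: float) -> float:
    """Wall-clock time normalized by the model time (model time converted from ms to s)."""
    return wall_clock_s / (model_time_ms / 1000.0)


def mean_and_spread(values: List[float]) -> Tuple[float, float]:
    """Mean and sample standard deviation across repeats (e.g. different random seeds)."""
    return statistics.mean(values), statistics.stdev(values)


def relative_contributions(phase_times: Dict[str, float]) -> Dict[str, float]:
    """Fraction of the state-propagation time spent in each phase."""
    total = sum(phase_times.values())
    return {phase: t / total for phase, t in phase_times.items()}


if __name__ == "__main__":
    # Made-up numbers for illustration only; they are not measured results.
    print(real_time_factor(wall_clock_s=50.0, model_time_ms=10_000.0))
    print(relative_contributions(
        {"update": 6.0, "collocation": 1.0, "communication": 2.0, "delivery": 1.0}
    ))
```

A real-time factor above 1 means the simulation runs slower than biological real time; for example, 50 s of wall-clock time for 10 s of model time gives a factor of 5.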

See also

For further details, see the accompanying beNNch GitHub page. For a detailed step-by-step walk-through, see the Walk through guide.

Example PyNEST script: Random balanced network HPC benchmark